Meet your customers where they are

Engage customers on their terms—meet them in the messaging channels they use every day.

Trusted by 75 of the Fortune 100 to improve customer and agent experiences while reducing costs and increasing revenue. We help leading brands empower agents, prevent fraud, and deliver superior experiences across any or all moments of the customer journey.

Delivering the omnichannel experiences customers expect requires an AI‑first approach. It’s only by combining automated and human engagement that you can achieve the best business outcomes.

Use AI to automate 80%+ of all engagements

Bridge AI automation and human engagements

Empower agents and create an AI learning loop

Instill customer trust with biometric security

Nuance omnichannel customer engagement solutions bring the power of conversational AI to the contact center and beyond.

Nuance Contact Center AI adds intelligence to your contact center platform through powerful AI services and developer tools, helping you enhance experiences, reduce costs, and increase operational efficiency.

Nuance digital and messaging solutions enable you to meet customers in their channel of choice, providing seamless experiences that increase satisfaction while improving efficiency and growing revenue.

Nuance voice solutions delight customers while driving down costs by providing conversational, automated experiences that contain calls in the IVR and accelerate resolution.

Nuance biometric authentication and intelligent fraud prevention solutions streamline, protect, and personalize every customer interaction.

Nuance analytics solutions automatically capture and analyze all omnichannel customer engagements to provide insights that help you revolutionize contact center performance.

Our cloud solutions integrate with leading Contact Center as a Service (CCaaS) vendors, cloud providers, and technology partners.

Our 700+ AI experts are on hand whenever you need them, with experience in highly specialized disciplines not easily found in‑house or on the market today.

Our intelligent engagement and security solutions help enterprises worldwide—including 75 of the Fortune 100—achieve remarkable business results.

increase in agent and employee satisfaction

CSAT increase

increase in NPS

automated first contact resolution

fraud prevention savings

annual savings

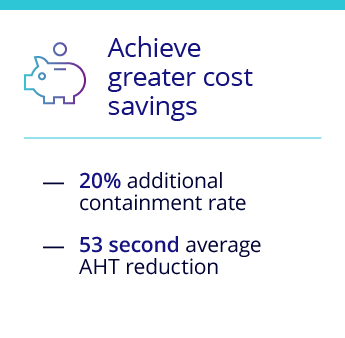

AHT reduction

average containment rate

conversion rate

improvement in upsells

improvement in new sales

ROI from reduced fraud-related losses

Delivering superior outcomes across any or all moments of a customer's journey

Nuance digital, voice, and biometric security solutions are proven to help brands increase the quality of customer experiences—and the value of customer relationships.

Our flexible deployment and partnering approach gives you total control over your AI transformation. You can do it yourself, tap into our expertise when you need it, or rely on us from end to end.

Customers who embrace an AI-first approach across the entire customer journey...

NLU intent recognition

biometric authentication

success rate

detection of fraud attempts

Nuance earns 2021 highest rating for enterprise intelligent assistants

Nuance named 2020-21 leader with strong enterprise execution and advanced NLU

Nuance recognized as a 2020 Leader in digital-first customer service solutions

Nuance awarded 2020 most innovative biometrics vendor